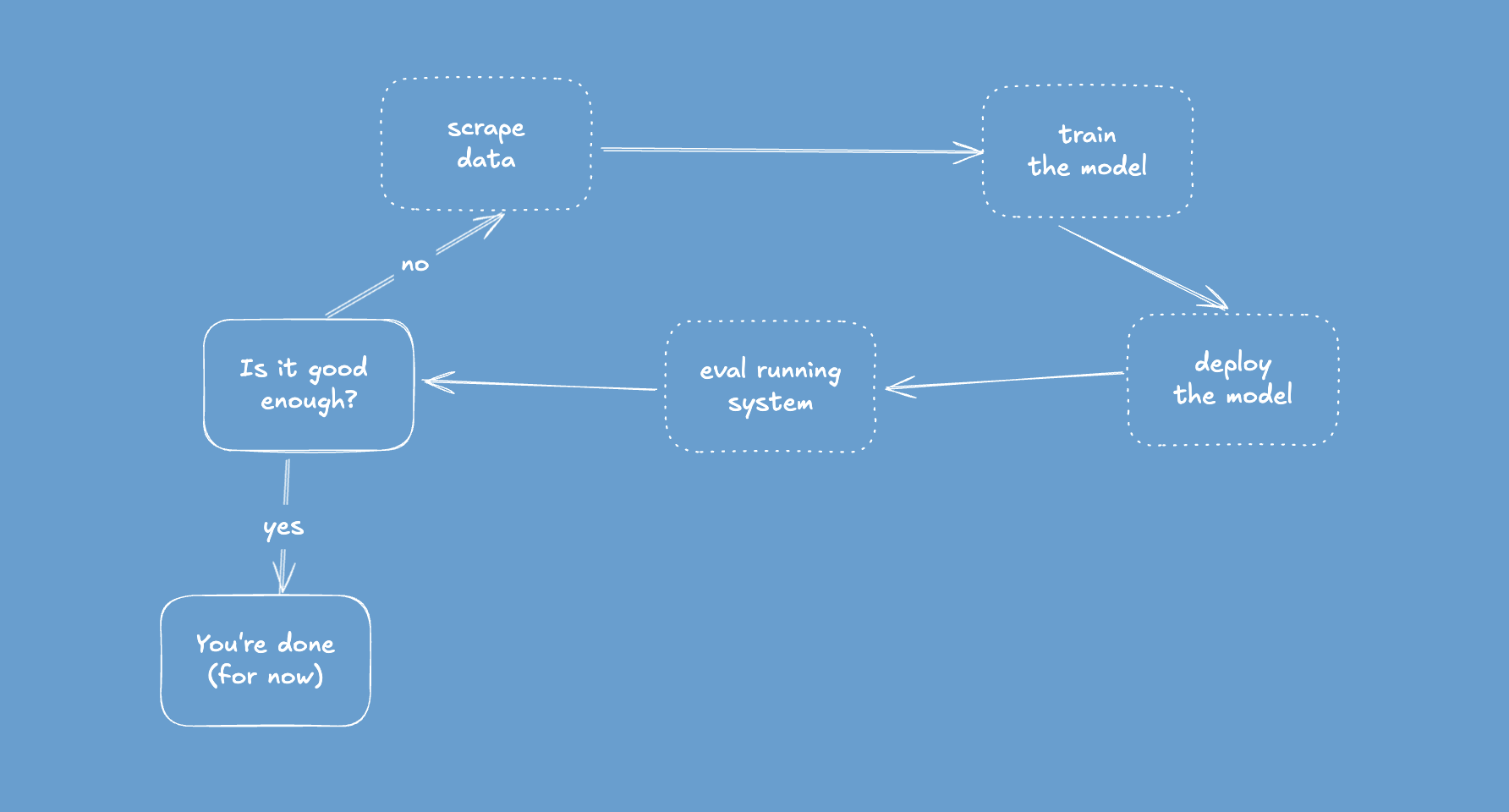

Close the loop

It’s hard to think more than a couple of steps ahead when you’re building something new.

Surprise, your data warehouse can RAG

Another post on the Rainforest blog, this time about how to do RAG in your existing data warehouse.

The importance of doing the exercises

You know the feeling of going through some materials and thinking “I got this, I understand everything”? And seeing the exercises at the end of the chapter and feeling they’re completely pointless because you understand everything already and it’s just a waste of time?

Building reliable systems out of unreliable agents

I wrote a post for the Rainforest blog about how you can build reliable systems out of the unreliable agents built with today’s LLMs. Check it out!

Deploy really hot code with habanero 🌶️

How can you dramatically decrease time-to-deploy? Wouldn’t it be cool if your CI/CD pipeline took very little time? Wouldn’t it be cool if it took zero time? Is that even possible?

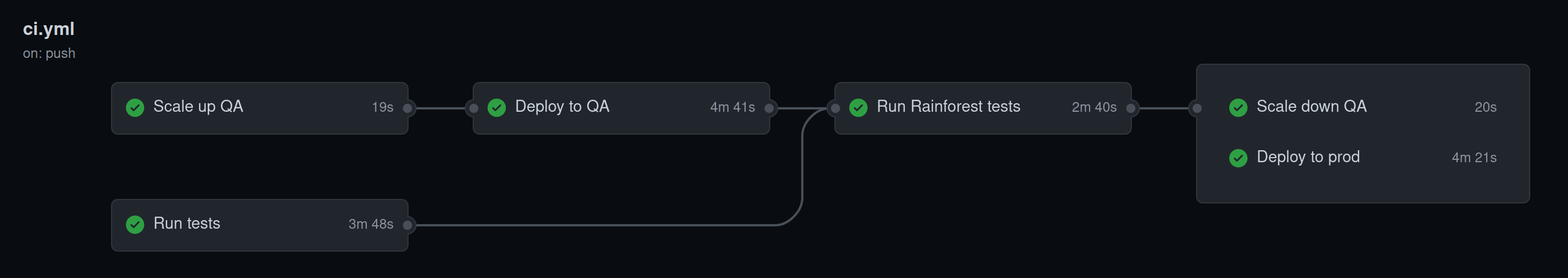

Deploying regex.help and CI/CD

Now that I’ve written up how regex.help was built, it’s time to focus on deployment. I used to think deployment was… not the most exciting part of building things. However, recently I find it more and more interesting, probably because I care more about good CI/CD. Elixir and fly.io make it even cooler and shinier - let’s jump in!

Using Rustler with Elixir 1.12/OTP 24

Read this if you want to get Rustler running on the new, shiny Elixir 1.12/OTP 24.

Building regex.help

Now that regex.help is functional and deployed (check it out if you’re writing regular expressions), I want to share how I built it. In the next post I will cover deployment and CI/CD setup. In case you want to look at the code, head over to the GitHub repo.

Setting up Plausible, Hitting the Tracker Wall

After building regex.help I was curious about how much traffic it gets. fly provides some basic metrics, but that wasn’t enough to really know how many people find the site helpful.

regex.help

Next time you’re trying to write some regex, check out regex.help - it should make your task much easier. A couple of weeks ago, after Secretwords was finished, a colleague pointed me towards grex. It’s a really neat tool to help you write regex.